Enterprise Technology Specs

Interface Preview

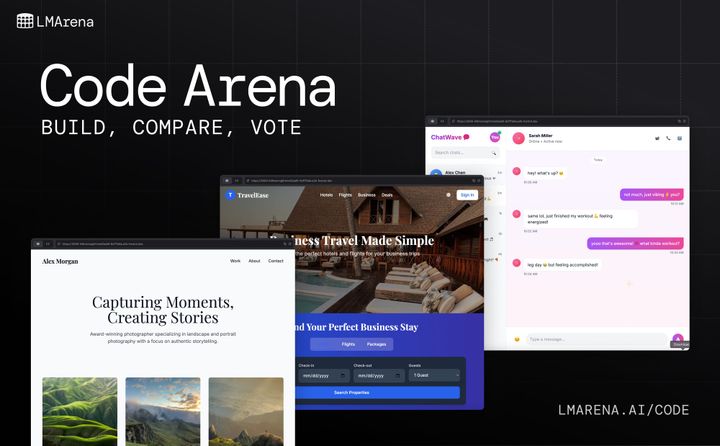

The Deep Dive

Arena feels a bit like having a testing lab for AI models.

Instead of guessing which model is better, you can compare their responses side by side and see the differences directly. That becomes genuinely useful once you start working seriously with prompts, automation, or AI products.

What makes Arena stand out is the speed of experimentation. You can run the same prompt across multiple models in seconds and quickly spot which one performs best for writing, coding, reasoning, or creative tasks.

It’s especially valuable for developers and AI teams who want to avoid costly trial and error when choosing a model.

That said, Arena is more about evaluation than creation. It won’t replace your main AI workspace, but it does make choosing the right model much easier, which matters more as the AI ecosystem grows increasingly crowded.

Top Use Cases

- Comparing LLM outputs

- Prompt engineering workflows

- Benchmarking AI models

- Evaluating response quality

- Research and testing

- AI workflow optimization

“An AI startup reduced model testing time by 58% and improved prompt optimization workflows by comparing GPT-based and open-source models directly inside Arena.”